KSampleHHG

class hyppo.ksample.KSampleHHG(compute_distance='euclidean', **kwargs)

HHG 2-Sample test statistic.

This is a 2-sample multivariate test based on univariate test statistics. It inherits the computational complexity of the univariate tests, which makes it faster than classic multivariate tests. The univariate test used is the Kolmogorov-Smirnov 2-sample test [1].

- Parameters

  - compute_distance (str, callable, or None, default: "euclidean") -- A function that computes the distance among the samples within each data matrix. Valid strings for compute_distance are, as defined in sklearn.metrics.pairwise_distances,

    From scikit-learn: ["euclidean", "cityblock", "cosine", "l1", "l2", "manhattan"]

    From scipy.spatial.distance: ["braycurtis", "canberra", "chebyshev", "correlation", "dice", "hamming", "jaccard", "kulsinski", "mahalanobis", "minkowski", "rogerstanimoto", "russellrao", "seuclidean", "sokalmichener", "sokalsneath", "sqeuclidean", "yule"] See the documentation for scipy.spatial.distance for details on these metrics.

    Set to None or "precomputed" if x and y are already distance matrices. To call a custom function, either create the distance matrix beforehand or create a function of the form metric(x, **kwargs) where x is the data matrix for which pairwise distances are calculated and **kwargs are extra arguments to send to your custom function.

  - **kwargs -- Arbitrary keyword arguments for compute_distance.

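As an illustration of the custom-function form metric(x, **kwargs) described above, the following is a minimal sketch using NumPy only. The function name scaled_euclidean and its scale keyword are hypothetical and not part of hyppo; the sketch also assumes that a custom metric is expected to return a square (n, n) matrix of pairwise distances.

```python
import numpy as np


def scaled_euclidean(x, scale=1.0, **kwargs):
    """Hypothetical custom metric of the form metric(x, **kwargs).

    x is an (n, p) data matrix. Returns an (n, n) matrix of pairwise
    Euclidean distances divided by `scale` (an illustrative keyword).
    Any other keyword arguments are accepted and ignored.
    """
    diff = x[:, None, :] - x[None, :, :]      # (n, n, p) pairwise differences
    return np.sqrt((diff ** 2).sum(axis=-1)) / scale


rng = np.random.default_rng(0)
x = rng.normal(size=(5, 3))
dist = scaled_euclidean(x, scale=2.0)
print(dist.shape)
```

A function like this could then be passed as compute_distance, with scale supplied through **kwargs.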

Notes

The statistic can be derived as follows [1]:

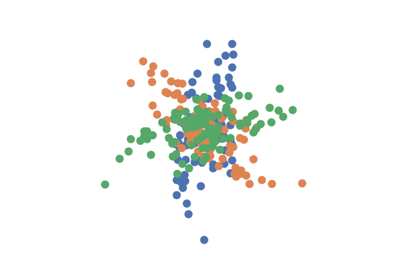

Let \(x\), \(y\) be \((n, p)\), \((m, p)\) samples of random variables \(X\) and \(Y \in \R^p\). Let there be a center point \(z \in \R^p\). For every sample point, calculate its distance from \(z\), and denote these distances as \(d_x(x_i)\) for the points in \(x\) and \(d_y(y_i)\) for the points in \(y\). This creates a 1D collection of distances for each sample group.

Then apply the KS 2-sample test to these center-point distances. This classic test compares the empirical distribution functions of the two samples and takes the supremum of the difference between them. See Notes under scipy.stats.ks_2samp for more details.
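To make the KS step concrete, here is a small sketch that computes the two-sided KS statistic directly from the two empirical distribution functions and compares it with scipy.stats.ks_2samp. The helper name ks_statistic and the simulated inputs are assumptions made for illustration; the 1D arrays stand in for the center-point distances.

```python
import numpy as np
from scipy.stats import ks_2samp


def ks_statistic(a, b):
    """Supremum of |ECDF_a - ECDF_b|, evaluated at every observed value."""
    grid = np.sort(np.concatenate([a, b]))
    ecdf_a = np.searchsorted(np.sort(a), grid, side="right") / len(a)
    ecdf_b = np.searchsorted(np.sort(b), grid, side="right") / len(b)
    return np.max(np.abs(ecdf_a - ecdf_b))


rng = np.random.default_rng(0)
a = rng.normal(size=50)            # stand-in for distances d_x
b = rng.normal(loc=0.5, size=60)   # stand-in for distances d_y

manual = ks_statistic(a, b)
reference = ks_2samp(a, b).statistic
print(np.isclose(manual, reference))
```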

To achieve better power, the above process is repeated with each sample point \(x_i\) and \(y_i\) as the center point. The resultant \(n+m\) p-values are then pooled for use in the Bonferroni test of the global null hypothesis. The HHG statistic is the KS statistic associated with the smallest p-value in the pool, while the HHG p-value is the smallest p-value multiplied by the number of sample points.
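The procedure described above can be sketched with NumPy and SciPy as follows. This is an illustrative re-implementation under stated assumptions, not hyppo's own code: the function name hhg_two_sample_sketch is hypothetical, Euclidean distance is used throughout, and each center point's zero distance to itself is kept in its own group.

```python
import numpy as np
from scipy.spatial.distance import cdist
from scipy.stats import ks_2samp


def hhg_two_sample_sketch(x, y):
    """Illustrative sketch of the center-point procedure (not hyppo's code)."""
    centers = np.concatenate([x, y], axis=0)  # every sample point acts as a center
    dist_x = cdist(centers, x)                # (n + m, n) distances from centers to x
    dist_y = cdist(centers, y)                # (n + m, m) distances from centers to y

    stats, pvalues = [], []
    for dx, dy in zip(dist_x, dist_y):
        stat, pvalue = ks_2samp(dx, dy)       # univariate KS test for one center
        stats.append(stat)
        pvalues.append(pvalue)

    best = int(np.argmin(pvalues))            # center with the smallest p-value
    n_points = centers.shape[0]
    # Bonferroni adjustment as described in the text; the product is not
    # capped here, so it can exceed 1.
    return stats[best], pvalues[best] * n_points


rng = np.random.default_rng(0)
x = rng.normal(size=(30, 2))
y = rng.normal(loc=3.0, size=(40, 2))         # clearly shifted second sample
stat, pvalue = hhg_two_sample_sketch(x, y)
print(stat, pvalue)
```

Because each center requires only a univariate KS test, the sketch performs \(n+m\) such tests in total.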

References

- [1] Ruth Heller and Yair Heller. Multivariate tests of association based on univariate tests. In D. Lee, M. Sugiyama, U. Luxburg, I. Guyon, and R. Garnett, editors, Advances in Neural Information Processing Systems, volume 29. Curran Associates, Inc., 2016. URL: https://proceedings.neurips.cc/paper/2016/file/7ef605fc8dba5425d6965fbd4c8fbe1f-Paper.pdf.

Methods Summary

| KSampleHHG.statistic(x, y) | Calculates K-Sample HHG test statistic. |
| KSampleHHG.test(x, y) | Calculates K-Sample HHG test statistic and p-value. |

KSampleHHG.statistic(x, y)

Calculates K-Sample HHG test statistic.

- Parameters

  x,y (ndarray of float) -- Input data matrices. x and y must have the same number of dimensions. That is, the shapes must be (n, p) and (m, p) where n and m are the number of samples and p is the number of dimensions.

- Returns

  stat (float) -- The computed KS test statistic associated with the lowest p-value.

KSampleHHG.test(x, y)

Calculates K-Sample HHG test statistic and p-value.